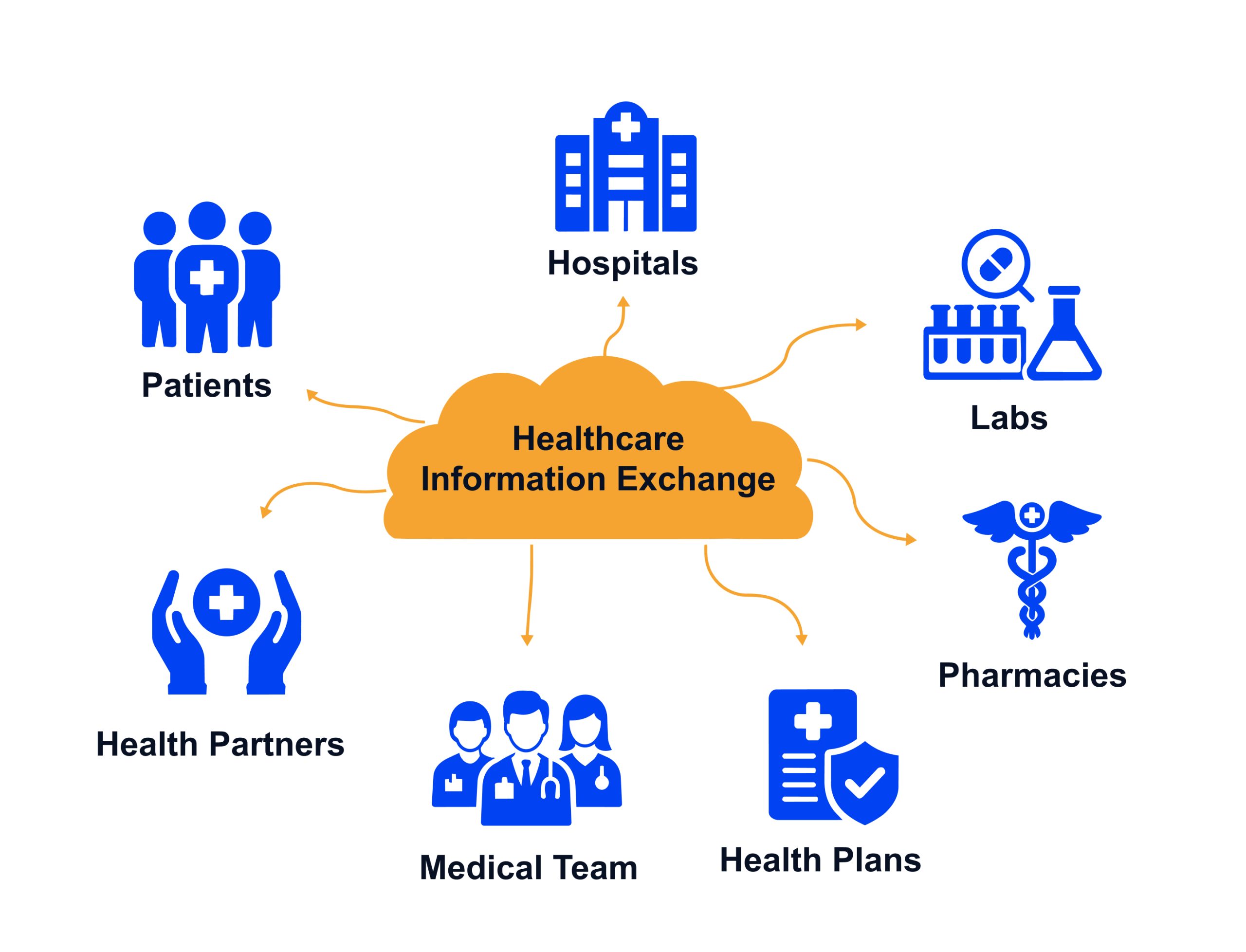

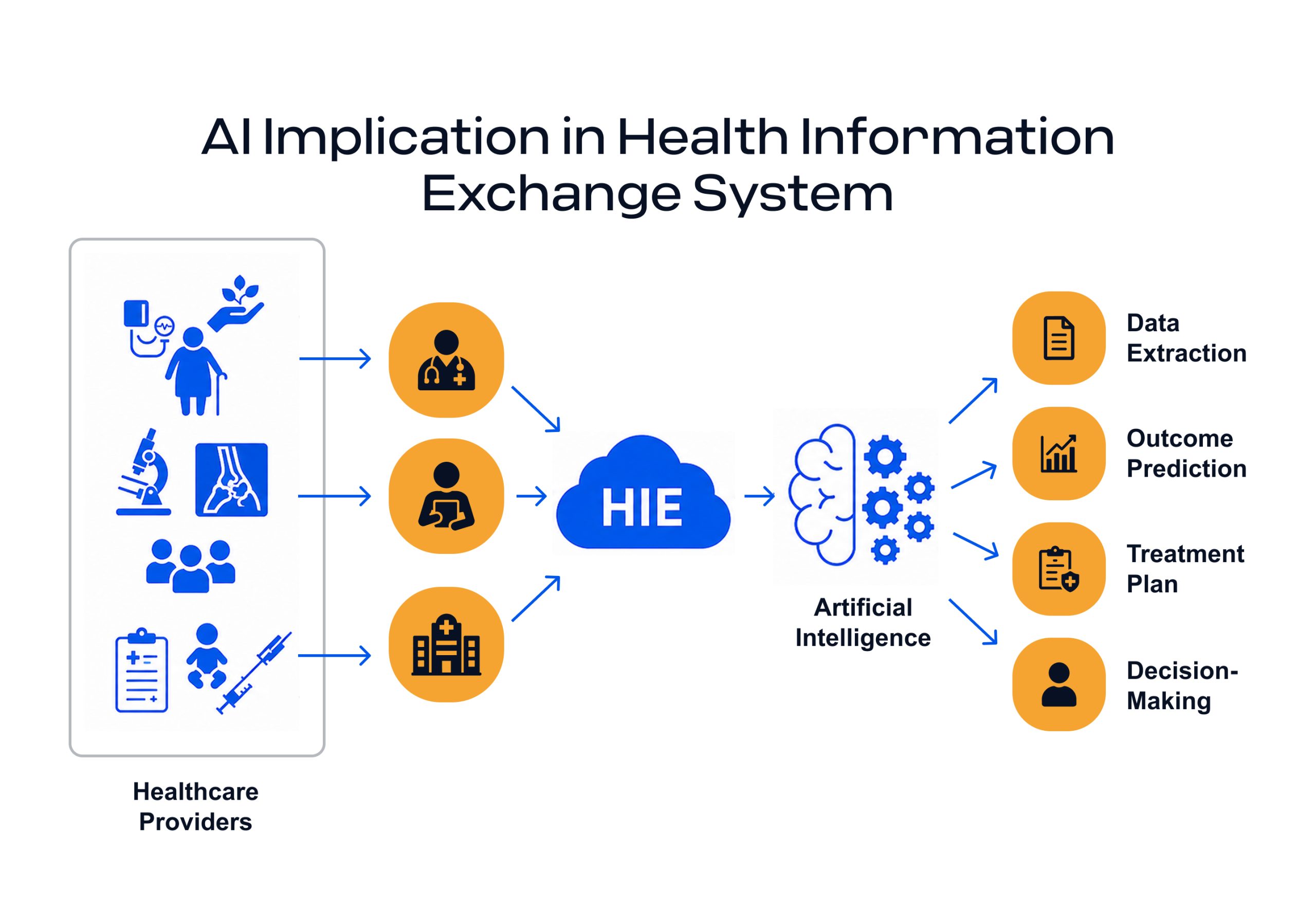

Health information exchange (HIE) is entering a new era driven by artificial intelligence (AI). Modern machine learning and large language models in particular are changing how health data is integrated, normalized, and shared across organizations.

Traditional extract-transform-load (ETL) processes that once required rigid schemas and extensive manual mapping are being augmented (and in some cases replaced) by AI-driven pipelines, enabling healthcare information exchange with AI at enterprise scale.

These intelligent systems can interpret unfamiliar data formats on the fly, fill in data gaps, and continuously learn to improve data quality, which is why many leaders now view AI in healthcare information exchange as a practical interoperability lever rather than a future concept.

For healthcare CIOs, CMIOs, and IT leaders, the implications are profound: faster onboarding of new data sources, more complete patient information flow, and more resilient interoperability, all while navigating strict privacy and regulatory requirements in AI in HIE systems. This article provides a comprehensive overview of how AI is transforming HIE, addressing technical advances and practical considerations for leadership.

AI Augmenting Traditional HIE Data Pipelines

In classic HIE implementations, data integration has relied on predefined mappings and ETL/ELT pipelines. Each new data source (an EHR feed, a lab interface, etc.) required careful extraction of fields, transformation into standard formats, and loading into a repository (in batch processing or real-time)—often a slow, manual process.

Intelligent models are now supercharging this pipeline by automating interpretation and transformation. Historically, converting each source into a standard format was complex and error-prone, frequently causing delays or even data omissions.

An AI system can instead ingest the data as is and automatically standardize it to the required schema before loading it into the target system. This reduces the burden on IT teams and encourages greater participation in data sharing by organizations that previously struggled with format mismatches.

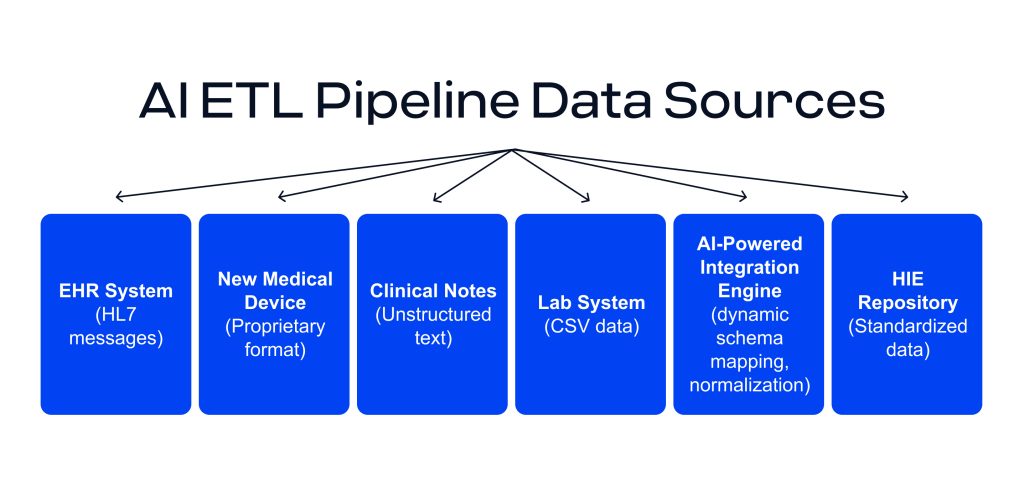

An AI-enhanced ETL pipeline can ingest diverse healthcare data sources, from HL7 messages to device outputs and unstructured notes, and dynamically standardize them into a common format for HIE. The AI-driven engine detects schemas, transforms data (e.g., to FHIR® or other standards), and applies normalization in real time, reducing the need for manual reformatting.

Such AI-augmented pipelines go beyond linear mappings. They can interpret context and content, not just field-to-field rules. For instance, large language models (LLMs) like GPT-4 or Google’s FLAN can take arbitrary input (documents, JSON, HL7 messages) and generate output in a target format through natural language instructions or fine-tuning, serving as AI models in health data exchange when deployed in production pipelines.

This means an HIE can leverage an LLM to convert a novel data feed into standardized HL7 FHIR resources without waiting for a human to program the transformation, illustrating AI for health data exchange in the integration layer. One real-world study demonstrated an LLM-based system (“FHIR-GPT”) converting unstructured clinical text into HL7 FHIR with over 90% exact-match accuracy, outperforming traditional multi-tool NLP pipelines.

In practice, this allowed parsing of medication statements from free-text notes with precision, showing that AI can reliably unravel complex healthcare data into a standardized form. Such capabilities hint that AI-driven ETL might soon handle tasks that used to require months of custom development, accelerating time-to-integration dramatically.

Dynamic Transformation and Real-Time Schema Adaptation

A powerful advantage of AI in HIE is the ability to handle non-linear data transformations and on-the-fly schema adaptation. Traditional ETL assumes a known source schema and target schema upfront. But healthcare data is notorious for variability—new fields get added, formats change, devices output unexpected structures.

AI can thrive in this variability by detecting and adapting to schema changes in real time, improving AI in clinical data exchange, where timeliness and consistency matter. AI-driven tools can monitor data streams and spot subtle schema drifts or new data fields automatically.

Continuous schema learning helps HIE integration become less brittle and more resilient to unexpected format changes.
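The drift monitoring described above can be illustrated with a small deterministic check. This is a minimal sketch, not a production detector: the baseline `KNOWN_SCHEMA`, the field names, and the `detect_drift` helper are all hypothetical, and a real pipeline would likely learn the baseline from historical traffic rather than hard-code it.

```python
from typing import Any

# Hypothetical baseline for one feed: field name -> expected Python type name.
KNOWN_SCHEMA = {"patient_id": "str", "heart_rate": "int", "recorded_at": "str"}


def detect_drift(record: dict[str, Any], schema: dict[str, str]) -> dict[str, list[str]]:
    """Compare one incoming record against the baseline schema."""
    # Fields the source started sending that the baseline does not know.
    added = sorted(k for k in record if k not in schema)
    # Fields the baseline expects that this record no longer carries.
    missing = sorted(k for k in schema if k not in record)
    # Fields present in both whose value type no longer matches.
    retyped = sorted(
        k for k in record
        if k in schema and type(record[k]).__name__ != schema[k]
    )
    return {"added": added, "missing": missing, "retyped": retyped}


# Made-up record: a new "spo2" field, a string heart rate, no timestamp.
incoming = {"patient_id": "p-1", "heart_rate": "72", "spo2": 98}
report = detect_drift(incoming, KNOWN_SCHEMA)
print(report)
```

A report like this could be what triggers the adaptive step: instead of rejecting the record, the pipeline routes the new or retyped fields to a model (or a person) to propose an updated mapping.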

Generative AI can infer structure from context and support near real-time schema generation for unfamiliar formats. For example, if a new medical device outputs data in a proprietary format, AI can analyze sample records, identify the content, and suggest a mapping or transformation into the HIE’s standard schema. In essence, the AI is writing the ETL rules dynamically, using AI models in healthcare to reduce the integration bottleneck.

Research from the data engineering domain supports this concept; LLMs can auto-generate transformation rules based on data characteristics and even automatically correct inconsistent data formats, greatly reducing manual intervention.

This flexibility gives the HIE an always-on data interpreter. Instead of rejecting unexpected inputs, AI can interpret, transform, and incorporate them through a more adaptive process, helping preserve valuable data that does not fit a predefined schema.

AI is shifting HIE integration from rigid, predefined mappings to adaptive pipelines that can interpret unfamiliar formats, detect schema drift, and standardize data in near real time. The result is faster onboarding of new sources, fewer dropped records, and more complete longitudinal patient data without months of custom integration work each time a feed changes.

Leveraging Previously Unused Data with AI

One major benefit is the ability to use data that would otherwise be ignored because of format mismatches, such as unstructured PDFs from external networks or custom logs from home health devices.

Previously, if the system couldn’t parse it, that data might be set aside, leading to gaps in the patient’s record. General-purpose models such as GPT-4 and instruction-tuned models such as FLAN-T5 are changing this. These models can understand and transform inputs that don’t fit known schemas, generalizing across inconsistent sources.

They provide a way to ingest infrequent, uncommon, or unpredictable data types that were not anticipated during system design.

Consider GPT-based agents that can read a document and output structured data. If a new IoT health monitor provides a data dump in a novel JSON structure, an AI agent can be prompted with instructions to convert it to, say, a FHIR Observation resource.

Even without explicit programming, the model leverages its trained knowledge to map fields appropriately, essentially generating a schema mapping in real time, enabling AI in healthcare information exchange across previously “unmappable” inputs.
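To make the IoT example concrete, here is a minimal sketch of the step that follows once a model has proposed a field mapping. The mapping itself (`SUGGESTED_MAPPING`), the source field names (`userId`, `ts`, `bpm`), and the `to_observation` helper are hypothetical stand-ins for what a model might return. The sketch assumes a heart-rate reading and a human or rule-based review of the mapping before it is applied.

```python
import json
from typing import Any

# Hypothetical mapping, as a model might propose it for one device feed.
# Keys are slots used by this sketch; values are source field names.
SUGGESTED_MAPPING = {"subject": "userId", "time": "ts", "value": "bpm"}


def to_observation(raw: dict[str, Any], mapping: dict[str, str]) -> dict[str, Any]:
    """Build a heart-rate FHIR Observation from a raw device record."""
    # Fail loudly if the proposed mapping points at fields that do not exist.
    missing = [src for src in mapping.values() if src not in raw]
    if missing:
        raise KeyError(f"source fields not found: {missing}")
    return {
        "resourceType": "Observation",
        "status": "final",
        "code": {
            "coding": [
                {"system": "http://loinc.org", "code": "8867-4", "display": "Heart rate"}
            ]
        },
        "subject": {"reference": f"Patient/{raw[mapping['subject']]}"},
        "effectiveDateTime": raw[mapping["time"]],
        "valueQuantity": {
            "value": raw[mapping["value"]],
            "unit": "beats/minute",
            "system": "http://unitsofmeasure.org",
            "code": "/min",
        },
    }


# Made-up device payload in a "novel" JSON structure.
device_record = {"userId": "12345", "ts": "2024-05-01T08:30:00Z", "bpm": 72}
observation = to_observation(device_record, SUGGESTED_MAPPING)
print(json.dumps(observation, indent=2))
```

Keeping the model's contribution limited to a small, reviewable mapping, and building the resource deterministically from it, is one way to make the output easier to validate than free-form generated JSON.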

Fine-tuned LLMs can speed up healthcare data conversion, as shown by RapidFHIR, where FLAN-T5-small was used to generate FHIR resources from minimal input in seconds. Another study on health data interoperability found that one LLM could replace several specialized tools for turning clinical text into structured data.

In HIEs, this means notes, PDFs, device data, and legacy formats can be parsed into usable records instead of remaining “orphan” data.

AI for Deduplication, Normalization, and Data Quality

Beyond format conversion, HIEs struggle with data quality issues: duplicate records, inconsistent codes, and missing or erroneous values are common. AI is proving invaluable in tackling these challenges through intelligent deduplication, normalization, and validation processes that strengthen healthcare information exchange with AI end-to-end:

- Patient Identity Deduplication: A long-standing problem for HIEs is merging records that belong to the same patient. Duplicate medical records are often caused by differences in names or IDs, or by data entry mistakes. AI can improve Master Patient Index (MPI) matching by using advanced fuzzy matching (logic that accepts inputs that are close rather than exact) and by learning from the patterns that create duplicates.

For instance, machine learning models can learn from past record links to better match nicknames, address changes, or typos. AI text recognition can even help find duplicated content, such as repeated pages in record files. Most deduplication services now use software and AI to do the cleaning, but they still need a person to review the more complicated cases.

An AI might flag two records as likely the same person by recognizing that “Bob Smith at 123 Main St” and “Robert Smith at 123 Main Street” are a match, where a simple deterministic algorithm might fail. By reducing duplicate entries, HIEs ensure that each patient has one complete, unified record, improving care quality and analytics.

- Data Normalization (Codes and Units): Healthcare data is coded in different ways. For example, one hospital might use local lab codes, while another might use LOINC. In one system, medications might be free-text, while in another, they might be coded in RxNorm. AI helps translate these terms into a common vocabulary. More and more modern HIE platforms have AI-assisted terminology services that can translate or normalize codes on the fly.

- Intelligent Data Cleaning and Imputation: AI can improve data quality by filling in gaps and validating values. Imputation engines powered by machine learning can infer missing values by looking at patterns across the HIE. For example, if one hospital encounter record is missing a diagnosis code that usually accompanies a certain procedure, the engine might fill it from context or other sources.

- Anomaly Detection and Validation: Keeping an HIE's data quality high requires constant checks on incoming data. AI is good at recognizing patterns, so it can learn what “normal” data looks like for each source and flag anything that doesn’t fit. An AI validation layer might learn that a certain lab result usually falls within a certain range. If a new message falls far outside that range or arrives in an unexpected format, the layer raises an alert. AI-based verification can protect data integrity by finding problems before they spread.

AI can also apply rules or even change the logic of transformations to fit schema changes. This proactive quality control makes sure that decisions about patient care and analytics are based on accurate, consistent data.
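The anomaly-detection idea from the list above can be sketched with a simple statistical baseline. This is an illustrative stand-in for a learned model, not a clinical rule: the potassium values are made up, and the `learn_range` and `is_anomalous` helpers and the three-standard-deviation threshold are assumptions chosen for the example.

```python
from statistics import mean, stdev


def learn_range(history: list[float], k: float = 3.0) -> tuple[float, float]:
    """Derive a 'normal' band for one source as mean +/- k standard deviations."""
    mu, sigma = mean(history), stdev(history)
    return mu - k * sigma, mu + k * sigma


def is_anomalous(value: float, bounds: tuple[float, float]) -> bool:
    """Flag values that fall outside the learned band."""
    low, high = bounds
    return not (low <= value <= high)


# Made-up serum potassium results (mmol/L) previously received from one lab feed.
potassium_history = [4.1, 3.9, 4.4, 4.0, 4.2, 3.8, 4.3]
bounds = learn_range(potassium_history)

for incoming in (4.5, 41.0):  # 41.0 resembles a misplaced decimal point
    status = "ALERT" if is_anomalous(incoming, bounds) else "ok"
    print(f"{incoming} mmol/L -> {status}")
```

A flagged value would typically be quarantined for review rather than silently corrected, which keeps the validation layer consistent with the human-oversight point made in this section.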

It’s important to remember that humans still need to stay in the loop. AI can make the grunt work of deduplication and normalization a lot easier, but experts should review its output, especially for edge cases. The best results come from combining AI’s speed with human expertise. Overall, AI is enabling HIEs to reach new levels of data quality: more complete, correct, and standardized information that leads to better clinical and business outcomes.
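The “Bob Smith” matching example and the human-review point can be combined in one short sketch. It uses Python's standard `difflib.SequenceMatcher` as a stand-in for a trained matching model. The nickname and abbreviation tables, the 0.6/0.4 weights, and the `auto` and `review` thresholds are illustrative assumptions, not recommended values.

```python
from difflib import SequenceMatcher

# Tiny illustrative lookup tables; a real MPI would use far richer resources.
NICKNAMES = {"bob": "robert", "bill": "william", "liz": "elizabeth"}
ABBREVIATIONS = {"st": "street", "ave": "avenue", "rd": "road"}


def normalize(text: str, table: dict[str, str]) -> str:
    """Lowercase, drop simple punctuation, and expand known short forms."""
    tokens = text.lower().replace(".", "").replace(",", "").split()
    return " ".join(table.get(t, t) for t in tokens)


def match_score(a: dict[str, str], b: dict[str, str]) -> float:
    """Weighted similarity of normalized name and address (0.0 to 1.0)."""
    name = SequenceMatcher(
        None, normalize(a["name"], NICKNAMES), normalize(b["name"], NICKNAMES)
    ).ratio()
    addr = SequenceMatcher(
        None, normalize(a["address"], ABBREVIATIONS), normalize(b["address"], ABBREVIATIONS)
    ).ratio()
    return 0.6 * name + 0.4 * addr


def triage(score: float, auto: float = 0.97, review: float = 0.80) -> str:
    """Merge only very confident matches; send borderline pairs to a person."""
    if score >= auto:
        return "auto-merge"
    if score >= review:
        return "human-review"
    return "distinct"


bob = {"name": "Bob Smith", "address": "123 Main St"}
robert = {"name": "Robert Smith", "address": "123 Main Street"}
smyth = {"name": "Robert Smyth", "address": "123 Main Street"}

print(triage(match_score(bob, robert)))
print(triage(match_score(robert, smyth)))
```

The middle band is the design choice that matters here: a near match such as a one-letter surname difference is routed to a reviewer instead of being merged or discarded automatically.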

Regulatory and Privacy Considerations

Integrating artificial intelligence into healthcare requires strict adherence to privacy, security, and regulatory standards, especially as organizations deploy AI in HIE systems for scale.

In the U.S., the HTI-1 Final Rule requires transparency for decision support algorithms in certified health IT, including clear documentation of their logic and data sources, which is relevant to AI that influences patient care or alters clinical data. In the EU, the AI Act treats many healthcare AI systems as high-risk, requiring explainability, human oversight, and data quality controls for systems in that category that process patient information.

One key concern is patient data privacy when using large AI models like GPT-4. Sending protected health information to cloud-hosted models may violate HIPAA or GDPR unless organizations implement safeguards such as Business Associate Agreements (BAAs), on-premises deployments, or federated learning. De-identification remains critical: AI tools must remove direct identifiers before processing, and privacy filters should verify that re-identification risk is minimal.

Model training also raises compliance issues. Using patient data to train or fine-tune models may require consent under GDPR or go beyond HIPAA’s treatment-use allowances. Organizations are increasingly using de-identified or synthetic data to stay compliant.

Both HTI-1 and the EU AI Act emphasize explainability and auditability. If an AI modifies a patient record, e.g., infers a missing value or normalizes a code, those changes must be logged and traceable. AI governance policies should be in place to review model outputs, monitor risks, and ensure regulatory alignment.
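A minimal sketch of what "logged and traceable" could look like for an AI-made change follows. The `AiChangeEvent` fields, the `apply_with_audit` helper, the model version string, and the example codes are all hypothetical. Neither HTI-1 nor the EU AI Act prescribes this structure, so treat it as one possible shape rather than a compliance template.

```python
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Any


@dataclass(frozen=True)
class AiChangeEvent:
    """One traceable modification made by an automated component."""
    record_id: str
    field: str
    old_value: Any
    new_value: Any
    model_version: str
    reason: str
    timestamp: str


def apply_with_audit(
    record: dict[str, Any],
    field: str,
    new_value: Any,
    model_version: str,
    reason: str,
    audit_log: list[dict[str, Any]],
) -> dict[str, Any]:
    """Return an updated copy of the record and append an audit entry."""
    event = AiChangeEvent(
        record_id=record["id"],
        field=field,
        old_value=record.get(field),  # None when the value was missing
        new_value=new_value,
        model_version=model_version,
        reason=reason,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    audit_log.append(asdict(event))
    # The original record is left untouched so the change can be reverted.
    return {**record, field: new_value}


audit_log: list[dict[str, Any]] = []
encounter = {"id": "enc-001", "procedure_code": "proc-x"}  # made-up record
updated = apply_with_audit(
    encounter,
    field="diagnosis_code",
    new_value="dx-y",  # placeholder for an inferred code
    model_version="imputer-0.1",
    reason="inferred from procedure context",
    audit_log=audit_log,
)
print(audit_log[0]["field"], audit_log[0]["old_value"], "->", audit_log[0]["new_value"])
```

Recording the previous value, the model version, and a reason alongside each change is what lets a reviewer later answer who or what altered a record, and why.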

When thoughtfully implemented, AI can strengthen privacy protections by improving data consistency, controlling access, and detecting anomalies. But success depends on designing systems that prioritize compliance, transparency, and trust from the start, which is increasingly central to smart health information exchange systems.

Conclusion

AI can improve how health information is exchanged and used by adding adaptability, automation, and learning to HIE data pipelines. It can help address long-standing challenges such as format incompatibilities, slow source onboarding, duplicate records, and incomplete data integration.

For healthcare CIOs and informatics leaders, AI should not be seen as a replacement for standards or governance, but as an enabler that supports them. It can handle much of the heavy lifting around data transformation and standardization, allowing experts to focus on oversight, governance, and innovation.

Early examples, from LLMs converting clinical notes into FHIR® to AI tools normalizing data across systems, show that AI-driven HIE is becoming a near-term reality.

Still, successful implementation requires trust, transparency, privacy protection, output validation, and human oversight. Used responsibly, AI can help create more responsive HIE architectures that improve data quality, adapt to new data sources, and deliver richer, more timely information to healthcare stakeholders.


FAQs

What types of healthcare organizations benefit most from AI-driven HIE solutions?

Organizations with lots of heterogeneous sources and high integration churn: multi-facility providers (hospital networks, clinics, labs/ACOs), payers with heavy payer-provider exchange, healthtech vendors/startups that need interoperability baked into their products, and national/regional programs running exchange at scale.

How does Edenlab ensure data privacy when implementing AI in HIE systems?

By designing privacy-first pipelines (pseudonymization, fine-grained access policies, audit trails) aligned with HIPAA, GDPR, and ISO 27001, and by implementing security controls such as encryption, access control, audit logging, and consent tooling. For large-scale exchange, Edenlab also describes separating personal and medical data via pseudonymization to reduce re-identification risk.

Can AI models be customized for specific national or regional healthcare regulations?

Yes, Edenlab positions delivery around compliance constraints (HIPAA/GDPR/ISO 27001 and national regulatory frameworks) and has experience with regulation-driven interoperability programs (e.g., ONC-related work and national-scale eHealth). Practically, this means tailoring governance, auditability, consent, and deployment/residency constraints alongside the AI layer.

How does AI impact the total cost of ownership for health information exchange systems?

It can reduce TCO by cutting manual mapping/ETL upkeep, speeding source onboarding, and lowering ongoing “integration firefighting,” but it can add costs (model operations, monitoring, validation, governance). Edenlab explicitly emphasizes cost efficiency via ready-made components and automation to accelerate delivery and reduce costs, which aligns with the “lower maintenance burden” side of the TCO equation.
