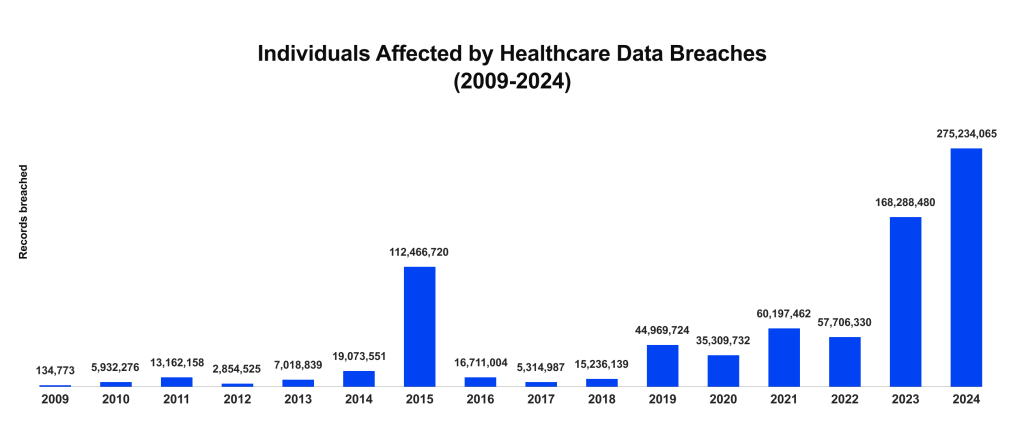

Teams building analytics platforms for healthcare big data now face demanding compliance requirements, and the stakes are enormous. After a record-breaking 2024, U.S. healthcare breach exposure fell in 2025: OCR-based reporting shows about 61.6 million affected individuals across 710 large healthcare breaches, down from 275 million individuals in 2024.

Effective health data privacy is no longer just a legal requirement but a core pillar of product development. Analytics products and data platforms fall under general data-protection and sector privacy laws. For instance, in the EU, the European Health Data Space directly regulates secondary use of health data for analytics. Let’s review the main laws that define how health data is used, shared, and secured.

- European Health Data Space (EHDS) sets rules for primary and secondary use of health data, including permits, access bodies, and data altruism, and defines technical and security controls for data holders and users.

- General Data Protection Regulation (GDPR) governs lawful bases for processing, impact assessments, data-subject rights, international transfers, and security.

- EU Artificial Intelligence Act (AI Act) regulates risk-based duties for AI systems, including data governance, transparency, and human oversight.

- Health Insurance Portability and Accountability Act (HIPAA) establishes a baseline for privacy, security, and breach notification of protected health information.

- Health Information Technology for Economic and Clinical Health Act (HITECH) strengthens HIPAA enforcement and breach notification and promotes electronic health record adoption.

- Brazilian General Data Protection Law (LGPD) establishes oversight by a national data protection authority, sets security duties, and defines data-subject rights.

- Saudi Personal Data Protection Law (PDPL) requires consent and security safeguards and governs cross-border transfers and breach notification.

Analytics is no longer a closed environment but an integral part of healthcare data flows. If your product collects or shares data with EHRs or payer infrastructures, you need to follow the access protocols and standards that govern those systems (most often FHIR® APIs) so that you collect data and return results correctly.

- Health Data, Technology, and Interoperability Rule (HTI-1, ONC, U.S.) updates certification; advances access, exchange, and transparency.

- Interoperability and Prior Authorization Final Rule (CMS-0057-F, U.S.) requires certain payers to expose FHIR APIs and share clinical/administrative data.

- Informationstechnische Systeme in Krankenhäusern (ISiK, Germany) mandates hospital interoperability profiles and FHIR-based APIs under gematik specifications.

- Interoperabler Datenaustausch durch Informationssysteme in der Pflege (ISiP, Germany) defines FHIR profiles for nursing and long-term care information systems.

- MedMij (Netherlands) sets a national framework for secure patient-to-provider exchange; provides FHIR guides for personal health apps.

This article breaks down what these privacy regulations in healthcare require in real terms, highlights the risks of falling short, and offers a clear path to building analytics systems that are both compliant and resilient in an evolving industry environment.

Highlights:

- In 2024, U.S. healthcare breaches exposed the records of 275M individuals, more than 80% of the U.S. population. In 2025, the figure dropped to at least 61.6M individuals.

- In 2024, a healthcare data breach cost an average of $9.8M. In 2025, it decreased to $7.42M, still well above the $4.44M global average across all industries.

- A cyberattack in New Zealand disrupted treatment for 350+ cancer patients, forcing urgent cancellations and transfers.

What Healthcare Data Privacy Laws Require

Creating an analytics platform means navigating a maze of healthcare privacy laws and frameworks. For start-ups and public health teams, the goal is to bake compliance into the product to earn user trust. Here, we break down what major healthcare data privacy regulations, like HIPAA (U.S.), GDPR (EU), and local counterparts such as Saudi Arabia’s PDPL or Brazil’s LGPD, demand.

- PHI (Protected Health Information)/PII (Personally Identifiable Information) handling. Collect only the health and personal information required for the specific purpose. Keep it accurate and secure, whether it is in structured formats like EHR records or unstructured formats such as scanned documents or images. The HIPAA Privacy Rule calls this the “minimum necessary” standard, while GDPR calls it data minimization. Both aim to protect patient privacy by limiting unnecessary data use.

- Patient consent. Before you use PHI for research or analytics outside a patient’s direct care, you generally need their informed consent or another lawful basis. Under GDPR, consent must be specific, documented, and easy for patients to withdraw. HIPAA permits use without patient authorization for treatment, payment, and routine health care operations, but the same safeguards still apply.

- Rights to access, correct, and delete. Patients have control over their health data. They can see it, correct it, and in some regions, have it deleted. That only works if your systems can retrieve the correct records quickly, update them without causing problems elsewhere, and keep a solid history of every change.

- Data storage and transmission. Data must be encrypted, whether stored or transmitted. In many regions, it can’t be transferred abroad without legal safeguards such as standard contractual clauses or an adequacy decision. Audit logs and multifactor authentication are now baseline requirements for privacy and security in healthcare.

- Anonymization and pseudonymization. Under GDPR, pseudonymized data remains personal data and needs safeguards. Under the European Health Data Space, secondary-use datasets should be anonymized by default; where only pseudonymized data can serve the purpose, permits and stricter controls apply. In the U.S., de-identify data under HIPAA (Safe Harbor or Expert Determination) when it must be used or disclosed outside HIPAA’s scope. Brazil’s LGPD treats anonymized data as non-personal unless re-identification is reasonably possible. Saudi Arabia’s PDPL and its guidelines define anonymization and pseudonymization and require keys to be kept separately.

- Connecting to external systems. If your analytics product or research project needs data from external EHRs or payer infrastructures, you must use the official access routes. Today, that usually means FHIR APIs and the SMART on FHIR framework, mandated by the 21st Century Cures Act. In practice, this ensures your platform can support HIPAA-compliant data exchange, securely collect the right data, return results back, and remain compliant with the same privacy rules that govern the source systems.
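The minimum-necessary and consent rules above can be enforced in code at the point where data leaves your store. The sketch below is a minimal illustration. It assumes a hypothetical per-purpose field allowlist (`PURPOSE_FIELDS`) and a simplified rule that every purpose other than treatment needs recorded consent. Real lawful bases vary by jurisdiction, and real allowlists would come from your own minimum-necessary analysis.

```python
from dataclasses import dataclass, field

# Hypothetical allowlists: which fields each processing purpose may receive.
PURPOSE_FIELDS: dict[str, set[str]] = {
    "treatment": {"patient_id", "name", "birth_date", "diagnoses", "medications"},
    "quality_analytics": {"patient_id", "diagnoses", "medications"},
    "research": {"diagnoses", "medications"},
}

# Simplification for this sketch: only direct care skips the consent check.
DIRECT_CARE = {"treatment"}


class AccessDenied(Exception):
    """Raised when a request fails the purpose or consent check."""


@dataclass
class Patient:
    record: dict[str, object]
    consented_purposes: set[str] = field(default_factory=set)


def fetch_for_purpose(patient: Patient, purpose: str) -> dict[str, object]:
    """Check consent for the purpose, then return only the fields it allows."""
    if purpose not in PURPOSE_FIELDS:
        raise AccessDenied(f"unknown purpose: {purpose}")
    if purpose not in DIRECT_CARE and purpose not in patient.consented_purposes:
        raise AccessDenied(f"no recorded consent for: {purpose}")
    allowed = PURPOSE_FIELDS[purpose]
    return {k: v for k, v in patient.record.items() if k in allowed}


if __name__ == "__main__":
    jane = Patient(
        record={"patient_id": "p-001", "name": "Jane Doe", "birth_date": "1980-04-02",
                "diagnoses": ["E11.9"], "medications": ["metformin"]},
        consented_purposes={"research"},
    )
    print(fetch_for_purpose(jane, "research"))
    # {'diagnoses': ['E11.9'], 'medications': ['metformin']}
```

Calling `fetch_for_purpose(jane, "quality_analytics")` raises `AccessDenied` because no consent is recorded for that purpose. Routing every query, export, or model run through one gate like this keeps the purpose check from being skipped in individual services.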

For those building and running healthcare analytics systems, best practices for securing health data shape system design, vendor choice, and how quickly you can respond to patients or regulators.

Risks in Healthcare Analytics Without Strong Privacy Controls

AI and ML can significantly enhance analytics in healthcare, but without robust privacy protections they can also cause significant harm. Addressing privacy and security concerns early is vital. Here are the most common problems and how to avoid them.

- Data leakage. Training on identifiable data can expose sensitive records. Use anonymized or pseudonymized datasets, add differential privacy, and run routine red-team tests to reduce exposure and improve patient data security.

- Missing audit trail. Without access logs, misuse and non-compliance go unseen. Enable immutable logs at database and API layers, schedule reviews, and set alerts so misuse doesn’t slip through.

- Weak anonymization. Small cohorts or rare conditions can make individuals re-identifiable. Strengthen anonymization or pseudonymization, and test datasets for re-identification risk before release.

- Unchecked secondary use. Data collected for care gets reused without a lawful basis. Enforce granular consent, verify permissions for each use, and gate access through APIs to keep use within the stated purpose.
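One common way to run the pre-release re-identification test is a k-anonymity check. Every combination of quasi-identifiers (attributes such as age band, partial ZIP code, and sex that are not identifying alone but can be in combination) must be shared by at least k records. The sketch below assumes that your own disclosure policy sets the quasi-identifier list and the threshold k. k-anonymity is one test among several, and it does not prevent attribute disclosure when every record in a group shares the same sensitive value.

```python
from collections import Counter


def group_sizes(rows: list[dict], quasi_identifiers: tuple[str, ...]) -> Counter:
    """Count how many rows share each combination of quasi-identifier values."""
    return Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)


def k_anonymity_violations(rows: list[dict], quasi_identifiers: tuple[str, ...], k: int) -> dict:
    """Return the quasi-identifier combinations shared by fewer than k rows."""
    return {combo: n for combo, n in group_sizes(rows, quasi_identifiers).items() if n < k}


if __name__ == "__main__":
    rows = [
        {"age_band": "40-49", "zip3": "021", "sex": "F", "dx": "E11.9"},
        {"age_band": "40-49", "zip3": "021", "sex": "F", "dx": "I10"},
        {"age_band": "40-49", "zip3": "021", "sex": "F", "dx": "J45"},
        {"age_band": "80-89", "zip3": "021", "sex": "M", "dx": "C91"},
    ]
    qi = ("age_band", "zip3", "sex")
    print(k_anonymity_violations(rows, qi, k=3))
    # {('80-89', '021', 'M'): 1}
```

The single 80-89-year-old man in this toy dataset would need to be generalized further (for example, into a wider age band) or suppressed before the dataset is released.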

Cost of Non-Compliance

Strong healthcare data protection matters far beyond meeting regulations. Failing to protect patient data can cost organizations millions and permanently weaken patient and partner trust.

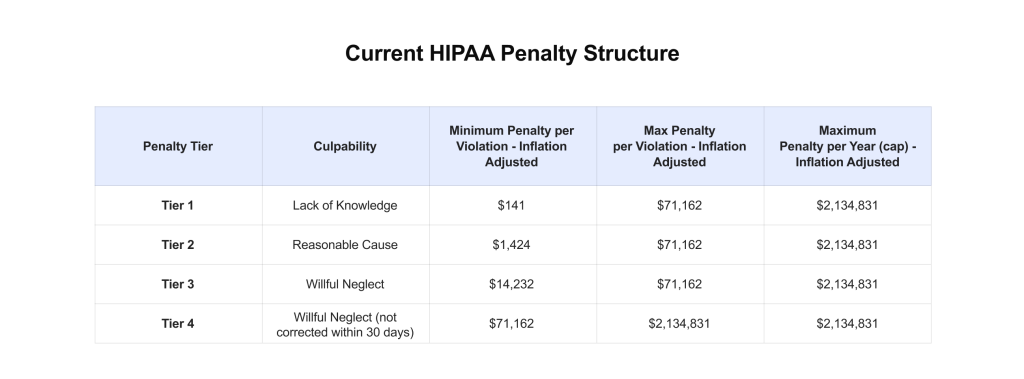

- Legal penalties. HIPAA civil penalties can reach about $2 million per year for violations of the same requirement, and criminal penalties can reach $250,000 and ten years in prison.

- Direct financial losses. Healthcare remains the industry with the most expensive data breaches. In 2024, the average breach cost soared to nearly $9.8 million. In 2025, it dropped to $7.42 million, but that still sits well above the $4.44 million global average across all industries.

- Reputation and trust. Once a breach happens, the damage extends well beyond dollars. Trust breaks down quickly among patients, partners, and investors. In one notable incident at New Zealand’s largest medical center, a cyberattack interrupted radiation treatments for over 350 patients. Hospitals had to cancel, delay, or move treatment sessions, and care teams had to coordinate with alternative facilities immediately.

Despite the technical risks and costly consequences we’ve covered, there are proactive ways to build analytical data platforms that are both cutting-edge and compliant. For instance, we partnered with a U.S.-based vendor to develop an AI-powered analytics platform tailored for clinical and research teams, particularly in stem cell research.

Designed with privacy in mind from day one, it features a graph-based backend and natural language interface, all built on a compliance-ready architecture. The platform helps users identify data quality issues and explore patient cohorts securely, and it’s already shaping the next generation of agent-driven healthcare tools.

How to Collaborate with Commercial Partners Safely and Smartly

When research groups and commercial partners such as pharmaceutical companies share analytics, the potential for breakthroughs is huge. But privacy can’t be an afterthought, and big data security in healthcare must be a priority. Stripping names and sending a spreadsheet isn’t enough. The entire process has to be built so that no one can trace the data back to real people.

In the U.S., HIPAA makes room for this kind of sharing, either with fully de-identified data or with what’s called a “limited data set.” Both come with strict rules. To de-identify data, you have to remove identifiers using an approved method, Safe Harbor or Expert Determination, and show that you’ve considered the risk of re-identification. A limited data set can keep some dates and geographic details, but it can be shared only under a data use agreement.
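To make Safe Harbor more concrete, the sketch below applies three of its rules. It keeps only the year of dates tied to an individual, collapses ages over 89 into a single “90+” category, and truncates ZIP codes to three digits unless that three-digit area has 20,000 or fewer residents, in which case the prefix becomes “000”. The sketch covers only a handful of the 18 identifier categories and assumes a hypothetical record layout and field names. It also uses placeholder values for the restricted ZIP prefixes, which in practice come from current Census data. It does not replace a full Safe Harbor review or an Expert Determination.

```python
from datetime import date

# Direct identifiers dropped outright (only a few of the 18 Safe Harbor categories).
DIRECT_IDENTIFIERS = {"name", "mrn", "ssn", "email", "phone", "street_address"}

# Placeholder: 3-digit ZIP prefixes covering 20,000 or fewer people.
# Populate from current Census data; these values are for illustration only.
RESTRICTED_ZIP3 = {"036", "059"}


def age_on(birth_date: date, today: date) -> int:
    """Age in whole years on `today`."""
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    return today.year - birth_date.year - (0 if had_birthday else 1)


def safe_harbor_subset(record: dict, today: date) -> dict:
    """Drop direct identifiers and generalize ZIP, birth date, and admission date."""
    out = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    if "zip" in out:
        zip3 = str(out.pop("zip"))[:3]
        out["zip3"] = "000" if zip3 in RESTRICTED_ZIP3 else zip3
    if "birth_date" in out:
        birth_date = out.pop("birth_date")
        if age_on(birth_date, today) > 89:
            out["age"] = "90+"  # birth year is dropped too, since it would reveal the age
        else:
            out["birth_year"] = birth_date.year
    if "admission_date" in out:
        out["admission_year"] = out.pop("admission_date").year
    return out


if __name__ == "__main__":
    record = {"name": "Jane Doe", "mrn": "A-123", "zip": "02139",
              "birth_date": date(1980, 4, 2), "admission_date": date(2024, 11, 5), "dx": "E11.9"}
    print(safe_harbor_subset(record, today=date(2025, 6, 1)))
    # {'dx': 'E11.9', 'zip3': '021', 'birth_year': 1980, 'admission_year': 2024}
```

Free-text fields such as clinical notes can still contain identifiers, so they need separate handling before any release.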

In Europe, the European Health Data Space (EHDS) regulation entered into force on March 26, 2025, and its obligations apply in stages over the following years. It lays out a formal system for using health data beyond its original purpose. Data should be anonymized by default, shared only through regulated channels, and approved by a national Health Data Access Body (HDAB). The rules limit use to permitted public-interest and scientific purposes, require secure processing environments, and ban any attempt to re-identify individuals.

In practice, a research team removes identifiers from trial data, requests access from the HDAB, and works inside a secure analytics environment. We use the same pattern in production with Edenlab’s Kodjin Analytics. It puts healthcare data into a FHIR-native format, prepared for advanced analytics and supported by an agentic LLM. This allows clinicians and managers to work with records, outcomes, and population health trends, knowing the data is reliable, organized, and ready when needed.

Privacy-First Architecture for Healthcare Analytics

A privacy-first architecture for healthcare analytics starts with design choices that reduce exposure from the ground up. Stateless components process information without keeping it longer than necessary, and consent-aware systems check permissions before every query, export, or model run. API-level access control limits access at the integration layer, so even internal services only get the data they’re cleared to use.
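API-level access control is often expressed through OAuth scopes. With SMART on FHIR, an access token carries scopes such as `patient/Observation.read`, and the API layer can refuse any request the token does not cover. The sketch below parses only SMART App Launch v1-style resource scopes (not the v2 `.rs`/`.cruds` syntax) and assumes the token has already been validated elsewhere. A real server must also restrict `patient/` scopes to the launched patient’s records.

```python
import re

# SMART v1-style resource scope: <context>/<ResourceType or *>.<read|write|*>
SCOPE_PATTERN = re.compile(r"^(patient|user|system)/([A-Za-z]+|\*)\.(read|write|\*)$")


def parse_resource_scopes(scope_string: str) -> set[tuple[str, str, str]]:
    """Extract (context, resource type, action) triples; ignore scopes like 'openid'."""
    triples = set()
    for scope in scope_string.split():
        match = SCOPE_PATTERN.match(scope)
        if match:
            triples.add(match.groups())
    return triples


def is_allowed(scope_string: str, context: str, resource_type: str, action: str) -> bool:
    """True if any granted scope covers this context, resource type, and action."""
    for granted_ctx, granted_type, granted_action in parse_resource_scopes(scope_string):
        if (granted_ctx == context
                and granted_type in (resource_type, "*")
                and granted_action in (action, "*")):
            return True
    return False
```

For example, a token granted `openid launch/patient patient/Observation.read` passes `is_allowed(..., "patient", "Observation", "read")` but fails the same check for `MedicationRequest`. An internal service that holds such a token sees only observations, even if it could technically reach the rest of the store.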

No single tool can fully solve all privacy issues in healthcare. Effective healthcare data security solutions rely on multiple layers that work together while the data is stored, moved, and analyzed:

- Encryption locks information into unreadable code, so without the right key, it’s just noise.

- Tokenization replaces things like patient IDs with random placeholders. The system can still run analytics on those placeholders, but the real values stay hidden in a secure vault.

- Anonymization takes out anything that might lead back to an individual. Pseudonymization masks those details but keeps a tightly guarded key for rare, approved cases when re-identification is needed, such as a follow-up study.
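Tokenization and the guarded re-identification key can be illustrated with a small vault. The sketch below is an illustration resting on several assumptions, not a production design. The vault issues random tokens and returns the same token for the same value, so analytics can still group and join on it. It reveals the original value only when a request is approved. The `TokenVault` class and its `approved` flag are hypothetical. In practice the mapping lives in a hardened, separately administered store.

```python
import secrets


class TokenVault:
    """In-memory stand-in for a token vault; a real vault is a separate, access-controlled service."""

    def __init__(self) -> None:
        self._by_token: dict[str, str] = {}
        self._by_value: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so joins and counts still work on tokenized data.
        if value in self._by_value:
            return self._by_value[value]
        token = "tok_" + secrets.token_hex(16)  # random, so it reveals nothing about the value
        while token in self._by_token:          # regenerate on the (very unlikely) collision
            token = "tok_" + secrets.token_hex(16)
        self._by_value[value] = token
        self._by_token[token] = value
        return token

    def detokenize(self, token: str, approved: bool) -> str:
        # `approved` stands in for a real approval workflow (hypothetical).
        if not approved:
            raise PermissionError("re-identification requires an approved request")
        return self._by_token[token]
```

The analytics pipeline sees only the `tok_...` values. Re-identification for an approved follow-up study goes through `detokenize`, which is the single place to enforce approvals and write audit entries.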

FHIR makes these protections easier to manage across different systems. It structures information with consistent metadata, so things like consent tracking, audit logs, and role-based access work the same way everywhere.

At Edenlab, we’ve implemented FHIR in some of the world’s largest interoperability projects, from national health information exchanges to complex payer–provider integrations, and have built FHIR-native products such as our Kodjin Data Platform. This experience means we know how to translate the standard’s theoretical benefits into real-world results: mapping legacy formats without performance loss, aligning with regulations and other frameworks, and embedding the right privacy-first mechanisms from the start.

For instance, we recently partnered with Elation Health in the U.S. to build a FHIR-compliant backend that bridged their existing EHR infrastructure to the 21st Century Cures Act’s API standards. By deploying a custom clinical-data mapper powered by the Kodjin FHIR Server, Elation met the ONC certification requirements efficiently, without a complete system redesign.

Global Trends and Upcoming Healthcare AI Data Privacy Regulations

The EU expects further regulatory change, including stricter enforcement of GDPR rules on secondary use of health data and the rollout of EU-wide frameworks such as the European Health Data Space (EHDS). The AI Act will also add obligations for analytics tools that use AI, in stages: prohibitions and AI-literacy duties from February 2, 2025, general-purpose AI (GPAI) model duties from August 2, 2025, most high-risk rules from August 2, 2026, and rules for AI embedded in regulated products from August 2, 2027.
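Because the AI Act’s duties arrive in stages, a release checklist can encode the milestones and report which ones already apply on a given date. The sketch below uses only the dates given in this section. Its labels are simplified summaries, and whether a given obligation applies to your system still needs legal review.

```python
from datetime import date

# Staged AI Act milestones as summarized in this article (labels simplified).
AI_ACT_MILESTONES: list[tuple[date, str]] = [
    (date(2025, 2, 2), "prohibited practices and AI-literacy duties"),
    (date(2025, 8, 2), "general-purpose AI (GPAI) model duties"),
    (date(2026, 8, 2), "most high-risk system rules"),
    (date(2027, 8, 2), "rules for AI embedded in regulated products"),
]


def obligations_in_force(on: date) -> list[str]:
    """Return the milestone labels whose start date is on or before `on`."""
    return [label for start, label in AI_ACT_MILESTONES if start <= on]


if __name__ == "__main__":
    for label in obligations_in_force(date(2025, 9, 1)):
        print(label)
```

Keeping the dates in one table means a timeline change requires editing a single entry rather than scattered checks.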

For healthcare analytics, treat AI in Software as a Medical Device (SaMD) or clinical decision support systems (CDSS) as high-risk, and check whether your use cases fall under Annex III, such as emergency triage or insurance scoring. High-risk systems must demonstrate risk management and data governance, keep logs and technical documentation, enable human oversight, and meet robustness, cybersecurity, and accuracy requirements.

Organizations should strengthen Data Protection Impact Assessments (DPIAs), adopt consent management that works across systems, and prepare standard contracts to support cross-border data sharing.

In the U.S., the Trusted Exchange Framework and Common Agreement (TEFCA) enables nationwide exchange and auditing, HIPAA remains the privacy and security baseline, and HITECH adds breach-notification duties. Together, they call for clear lineage, immutable audit logs, strong de-identification, and secure APIs to ensure medical data security and maintain data privacy in healthcare.

Across the Middle East and North Africa (MENA) and in Latin America (LATAM), healthcare data privacy regulations in 2025 are getting closer to GDPR. As examples, both the Personal Data Protection Law (PDPL) of Saudi Arabia and the Brazilian Data Protection Law impose stricter cross-border transfer rules, increase penalties, and, in some cases, require data to be stored within national borders.

If your setup uses modular privacy controls, you can adjust transfer mechanisms and procedures to comply with one country’s rules without re-architecting the whole system.
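One way to keep transfer rules modular is to hold them as data, one entry per jurisdiction, behind a single gate that every export calls. The sketch below is illustrative only. `COUNTRY_X` is a hypothetical jurisdiction, and the rule fields are assumptions. Real values must come from legal review of GDPR, LGPD, PDPL, and any other applicable laws.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransferRule:
    allowed_mechanisms: frozenset[str]  # e.g. "adequacy", "scc" (standard contractual clauses)
    require_local_copy: bool = False    # data-localization requirement


# Placeholder policy table, keyed by the jurisdiction the data comes from.
TRANSFER_POLICY: dict[str, TransferRule] = {
    "EU": TransferRule(frozenset({"adequacy", "scc"})),
    "COUNTRY_X": TransferRule(frozenset({"scc"}), require_local_copy=True),  # hypothetical
}


def can_transfer(source: str, mechanism: str, has_local_copy: bool) -> bool:
    """Deny by default; otherwise apply the source jurisdiction's rule."""
    rule = TRANSFER_POLICY.get(source)
    if rule is None:
        return False
    if rule.require_local_copy and not has_local_copy:
        return False
    return mechanism in rule.allowed_mechanisms
```

If one country tightens its rules, only its entry changes. For example, `TRANSFER_POLICY["COUNTRY_X"] = TransferRule(frozenset(), require_local_copy=True)` blocks all exports from that jurisdiction, and the rest of the pipeline stays untouched.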

Building a Compliance Roadmap for Your Analytics

Bring your analytics into healthcare data privacy compliance with a clear, repeatable plan. Anchor it in privacy‑by‑design, measurable controls, and data security standards that regulators recognize.

- Step 1: Audit and gap analysis

- Step 2: Architecture and policy design

- Step 3: Control implementation and testing

- Step 4: Monitoring and continuous assurance

Once you’ve set up your core health data privacy controls, the next step is making sure they work consistently across every data source and application in your analytics setup. That’s where FHIR proves its value. By unifying formats and metadata, FHIR removes much of the friction in applying the same consent rules, audit logging, and role-based permissions across disparate systems.

FHIR by itself doesn’t guarantee compliance, but it provides the framework to get there. With its resources and implementation guides, you can track consent, control access, and maintain full audit trails. That way, a patient’s privacy choices stay with their data, whether it moves between EHRs, claims systems, research platforms, or public health registries.
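To see how a privacy choice can travel with the data, consider a FHIR R4 `Consent` resource that denies use by default but permits healthcare research (purpose code `HRESCH` from the HL7 v3 ActReason code system). The sketch below is a deliberately simplified evaluator. It assumes an R4-style resource trimmed to the fields it reads. A real consent engine also handles periods, actors, data classes, security labels, and policy references.

```python
ACT_REASON = "http://terminology.hl7.org/CodeSystem/v3-ActReason"


def consent_permits(consent: dict, purpose_code: str) -> bool:
    """Simplified: the base provision sets the default; a nested provision naming the purpose overrides it."""
    if consent.get("resourceType") != "Consent" or consent.get("status") != "active":
        return False
    base = consent.get("provision", {})
    decision = base.get("type", "deny")  # assumption: treat a missing type as deny
    for nested in base.get("provision", []):
        purposes = {(c.get("system"), c.get("code")) for c in nested.get("purpose", [])}
        if (ACT_REASON, purpose_code) in purposes:
            decision = nested.get("type", decision)
    return decision == "permit"


# Trimmed example: deny everything except healthcare research.
research_only = {
    "resourceType": "Consent",
    "status": "active",
    "patient": {"reference": "Patient/example"},
    "provision": {
        "type": "deny",
        "provision": [
            {"type": "permit", "purpose": [{"system": ACT_REASON, "code": "HRESCH"}]},
        ],
    },
}
```

Here `consent_permits(research_only, "HRESCH")` returns `True`, and any other purpose code falls back to the base `deny`. Because the decision is read from a standard resource, an EHR, a claims system, or a research platform can all apply the same rule.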

At Edenlab, we’ve implemented FHIR-native architectures at national and enterprise scale. Our Kodjin platform accelerates the process with ready-made, standards-aligned modules for secure data sharing, consent management, and compliance monitoring, so you can apply medical data privacy controls uniformly while meeting regulatory mandates across multiple jurisdictions.

Why Edenlab Is Your Reliable Strategic Partner

We design and deliver analytics platforms tailored to healthcare start-ups and public health research teams. Whether it’s a complete analytics stack or focused support with data preparation, modeling, or semantic layers, our team brings deep expertise and a strong track record.

Our work ranges from commercial products to national health data systems. With experience building platforms like Kodjin and other large-scale solutions, we know how to deliver in highly regulated environments. Our strength lies in semantic interoperability and health information privacy compliance, with hands-on knowledge of standards such as SMART on FHIR, ensuring data is both usable and secure.

As your strategic consulting partner, we build proofs of concept that are fully aligned with your use case and the regulatory requirements that matter in the U.S., EU, or beyond.

In one standout collaboration in the U.S., Edenlab partnered with Zoadigm, a leader in healthcare-augmented intelligence and provider network coordination, to build a FHIR-based analytics platform that tackles the perennial challenge of fragmented health data.

By consolidating disparate electronic health record sources, Edenlab delivered a scalable, cloud-agnostic microservices architecture. The platform’s semantic FHIR layer enables clinicians and payers to define complex, multidimensional patient cohorts, outline treatment pathways, and model payment data through intuitive visual tools. All this is protected with HIPAA-compliant processes, ensuring powerful insights and strict privacy standards.

Conclusion

Building a healthcare analytics platform today means working within a framework of trust, security, and compliance. Regulations are becoming more demanding, and falling short can cost far more than fines. It can disrupt care, damage partnerships, and erode patient confidence.

Innovation and data privacy in healthcare don’t have to conflict. With the right architecture, governance, and technical foundations, you can meet HIPAA, GDPR, EHDS, and other regulations quickly and efficiently.

At Edenlab, we build compliance into every stage, from early design to launch, for projects ranging from start-up platforms to national health systems and cross-border research networks. Our FHIR-native platforms and privacy-first design ensure data is protected, patient rights are respected, and your systems stay prepared for what’s next.

If you want to get the most from your healthcare data without sacrificing trust or speed, we can help you make it happen securely, compliantly, and with confidence.

Build healthcare analytics that put privacy first

We design analytics platforms with compliance built in: encrypted pipelines, consent-aware workflows, and audit-ready infrastructure that protect patient trust while keeping you ready for growth. If you want privacy and innovation to work hand in hand, we can help you make it real.

FAQs

Can analytics dashboards expose protected health data accidentally?

They can if patient data privacy controls are weak. A dashboard that queries raw records may display identifiers or sensitive notes. The solution is to require role-based access, use de-identified datasets, and apply privacy filters before the data is visualized.
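A simple privacy filter for dashboards is small-cell suppression: aggregate first, then hide any count below a minimum cell size before it reaches the chart. The sketch below combines that filter with a role check. The role names and the threshold of 11 are assumptions standing in for your own access and disclosure policies.

```python
from collections import Counter

ALLOWED_ROLES = {"analyst", "clinician"}  # hypothetical roles cleared for cohort views
MIN_CELL_SIZE = 11                        # assumed policy threshold


def cohort_counts(rows: list[dict], group_by: str, role: str) -> dict[str, object]:
    """Aggregate rows by one field and mask counts below MIN_CELL_SIZE."""
    if role not in ALLOWED_ROLES:
        raise PermissionError(f"role {role!r} may not view cohort counts")
    counts = Counter(row[group_by] for row in rows)
    return {key: (n if n >= MIN_CELL_SIZE else f"<{MIN_CELL_SIZE}")
            for key, n in counts.items()}
```

Suppression alone can be undone if the dashboard also shows exact totals, because a hidden cell can be recovered by subtraction. Totals need the same treatment, for example complementary suppression or rounding.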

What’s the difference between anonymization and pseudonymization?

When you anonymize data, you remove all information that could be used to identify a person, so the data can no longer be linked back to them. Pseudonymization replaces identifiers with codes, but whoever holds the key or mapping can restore the link, for example when there is a valid legal reason.
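A common way to pseudonymize identifiers is a keyed hash such as HMAC-SHA256. Anyone can reverse a plain hash of a medical record number by hashing guessed numbers. A keyed hash, by contrast, can only be recomputed by whoever holds the secret key, and that is what makes the link restorable under controlled conditions. The sketch below assumes the key is generated once and stored separately from the dataset. The `relink` helper is a hypothetical illustration of an approved re-identification step.

```python
import hashlib
import hmac
import secrets
from typing import Iterable, Optional


def pseudonymize(patient_id: str, key: bytes) -> str:
    """Keyed, deterministic pseudonym: the same ID and key always give the same code."""
    return hmac.new(key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


def relink(pseudonym: str, candidate_ids: Iterable[str], key: bytes) -> Optional[str]:
    """Key holders only: find which known ID produced this pseudonym."""
    for candidate in candidate_ids:
        if hmac.compare_digest(pseudonymize(candidate, key), pseudonym):
            return candidate
    return None


if __name__ == "__main__":
    key = secrets.token_bytes(32)  # store apart from the data, e.g. in a key-management service
    code = pseudonymize("MRN-0042", key)
    print(relink(code, ["MRN-0041", "MRN-0042"], key))  # MRN-0042
```

Destroying the key, and any mapping tables, removes the ability to relink. Even then, the remaining attributes still need an anonymization review before the data can be treated as anonymous.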

How do I manage patient consent for secondary data use?

Consent must be clear, documented, and easy to withdraw. The ideal method is to incorporate consent checks into the system so that each new use of data is automatically verified.

Are LLM-based analytics tools HIPAA-compliant?

Not on their own. They comply with HIPAA only if they run in secure environments covered by the right agreements (such as a business associate agreement with any vendor that handles PHI), use de-identified data wherever feasible, and have safeguards that prevent the disclosure of private information.

How do audit logs support GDPR and HIPAA compliance?

Audit logs record who accessed information, when, and what they did with it. They give regulators evidence of how data was handled and help organizations detect misuse early.
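Audit logs are most useful as evidence when tampering is detectable. A minimal way to get that property is a hash chain: each entry stores the hash of the previous one, so editing or deleting an earlier entry breaks every hash after it. The sketch below is an in-memory illustration of that idea. Production systems typically write to append-only or WORM storage and periodically record the latest hash somewhere independent, because a chain alone cannot show that entries were cut off the end.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained audit log (in-memory sketch)."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself, with stable key order.
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, actor: str, action: str, resource: str) -> dict:
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "resource": resource,
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or removed middle entry makes this False."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```

A scheduled job that calls `verify()` and alerts on failure turns the log from a passive record into a control that reviewers and regulators can rely on.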
