AI is everywhere in healthcare right now, not just in decks and demos, but in real hospital workflows. In 2024, 71% of U.S. hospitals reported using predictive AI, up from 66% in 2023. That’s why AI in healthcare regulation is now a business-level topic, not legal theory.

While there is no single all-encompassing “AI law” for medicine, government regulations on artificial intelligence in healthcare do exist, woven into the fabric of longstanding health laws and overseen by multiple agencies.

In this article, we explain how AI in U.S. healthcare is regulated today, which authorities are involved, and why it matters for your business strategy and product design.

Why care about AI regulations in healthcare? This guide will provide:

- A clear overview of U.S. AI regulations in healthcare: how current laws (FDA, HIPAA, etc.) apply to AI and what new rules are emerging.

- Clarity on who regulates what in health AI (FDA for devices, HHS for data, FTC for fairness, and more) and why it matters commercially.

- Insight into how regulation shapes AI business strategy, from product design and timelines to adoption and investment risk.

- Perspective on using regulation as a design constraint that can drive innovation (rather than kill it), helping build trust and scalable solutions.

- Foresight into the future: how today’s AI policy updates and pilot programs are likely to solidify into more explicit rules, giving businesses more stability (not less).

By the end, you should feel confident that pursuing AI in healthcare is possible and scalable, not blocked by law, but achieved by smart navigation of the rules.

Is AI in Healthcare Actually Regulated in the U.S.?

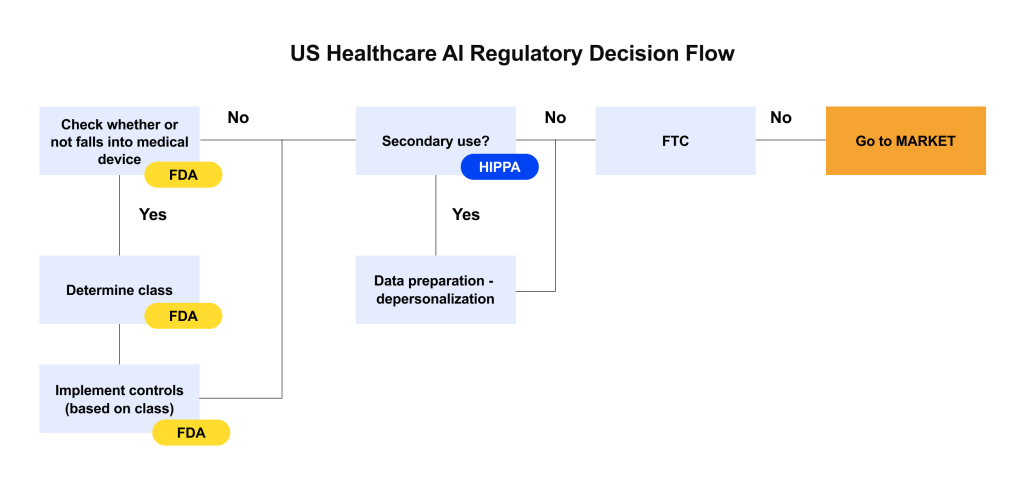

The U.S. has no dedicated federal “AI in healthcare” law. Instead, a patchwork of existing healthcare laws and agencies regulates AI indirectly: regulators interpret and apply current rules to AI products.

As of 2026, Congress has not passed any comprehensive AI healthcare law. Many proposals focus on voluntary guidelines to avoid stifling innovation. In the meantime, federal agencies are updating their own AI-related rules and guidance.

The FDA evaluates AI tools under its medical device framework. In 2016, the 21st Century Cures Act exempted low-risk clinical software that supports physicians’ decisions from FDA oversight. (In 2022, the FDA clarified which AI-driven decision support tools are exempt and which are devices, with complex, non-transparent algorithms more likely to be treated as devices.)

AI systems that handle protected health information must comply with HIPAA just as human staff must, even though HIPAA never mentions AI. The FTC warns that unfair or deceptive AI use violates consumer protection laws.

Leaders should recognize that AI in healthcare is neither lawless nor prohibited. The absence of an AI-specific statute does not mean “anything goes”; you must map your AI product to existing legal categories. Understanding early on the scenarios in which your product will be used helps you allocate time and resources and avoid costly pivots.

Policy is also changing rapidly. In late 2023 and 2024, the Office of the National Coordinator (ONC) issued a first-of-its-kind rule requiring algorithm transparency in certified health IT systems, and HHS released a 2025 AI Strategy indicating prospective regulatory areas.

By 2026, federal oversight is becoming more structured, and keeping up with AI regulation news, the latest rules, and agency guidelines will be part of the job for healthcare innovators.

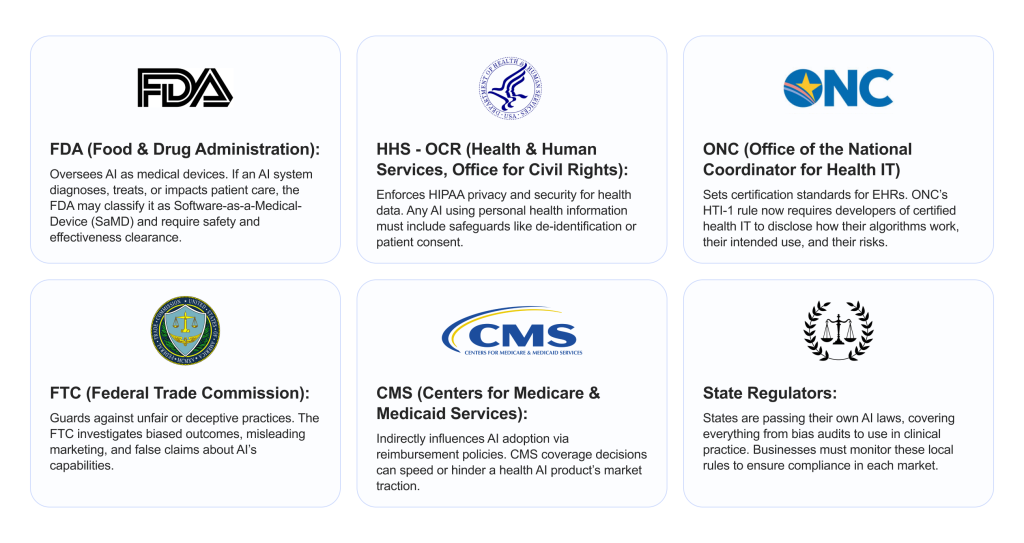

What Key U.S. Authorities Are Shaping Healthcare AI Oversight?

Multiple regulators govern how AI can be used in healthcare. Here are the key players and what each focuses on.

Let’s dive deeper into the top three authorities (FDA, HHS/HIPAA, FTC) and what they mean for healthcare AI.

FDA: AI as Medical Software

The FDA is the chief regulator for any AI that functions as a medical device. In the U.S., a device is not just hardware; software that diagnoses, advises, or treats patients can be regulated, too. The FDA has stated it will “regulate AI/ML software under its existing medical device framework” rather than create a separate AI law.

- When AI triggers FDA oversight: If your AI tool is intended for clinical use, it likely meets the definition of a medical device. The FDA then requires you to obtain clearance or approval before marketing it. On the other hand, an AI that’s purely administrative (say, scheduling or billing) or purely informational (like a wellness chatbot) might not be considered a regulated device.

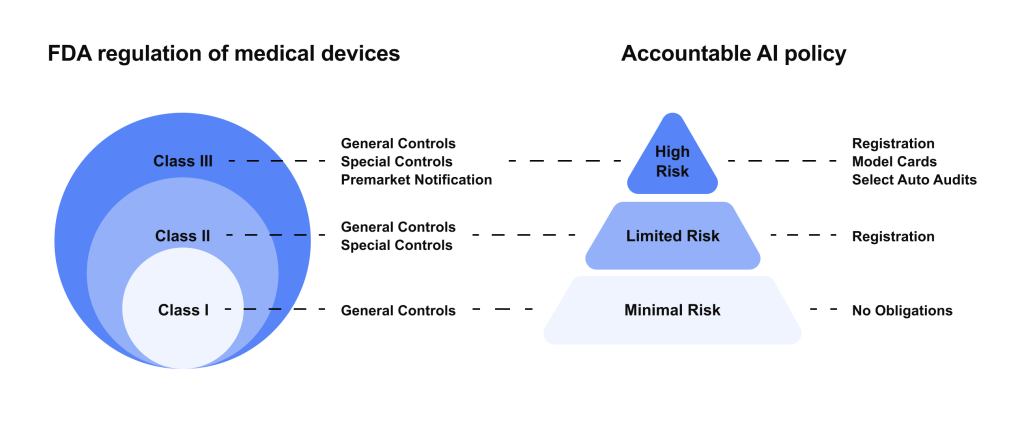

- Risk-based classification: The FDA uses a tiered risk approach.

  - Class I (low risk) devices are mostly exempt from review (few AI tools fall here, usually simple wellness apps).

  - Class II (moderate risk) devices require a 510(k) premarket notification; most AI diagnostics and decision support tools end up here.

  - Class III (high risk) devices (e.g., AI that directly administers therapy) need full Premarket Approval (PMA) with clinical trials.

- Adaptive AI and updates: One challenge is that AI models can learn and evolve after deployment. Traditionally, device approvals are for a fixed product. The FDA has been piloting approaches for “adaptive” AI, e.g., requiring Predetermined Change Control Plans and ongoing post-market monitoring. For now, if you significantly modify your algorithm’s logic, you may need a fresh clearance unless a change protocol was approved up front.

Examples of FDA-regulated AI: By early 2025, the FDA had cleared or approved over 1,000 AI-enabled medical devices, from imaging diagnostics to EKG analysis tools. The pace is accelerating: in one study, more than half of the AI devices reviewed had been cleared in just the last year of the review period. This surge indicates both growing innovation and the FDA’s increasing comfort in evaluating AI.
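To make the triggers and risk tiers in this section concrete, here is a rough first-pass triage sketch in Python. It is a deliberate oversimplification: the `IntendedUse` fields, the `Pathway` enum, and the `triage` function are hypothetical names invented for this illustration, and real classification depends on FDA regulations and precedent rather than three yes/no flags.

```python
from dataclasses import dataclass
from enum import Enum


class Pathway(Enum):
    NOT_A_DEVICE = "likely outside FDA device oversight"
    CLASS_I = "Class I: mostly exempt from premarket review"
    CLASS_II = "Class II: 510(k) premarket notification"
    CLASS_III = "Class III: Premarket Approval (PMA)"


@dataclass(frozen=True)
class IntendedUse:
    # Hypothetical, simplified flags; a real assessment needs regulatory counsel.
    clinical_purpose: bool              # diagnoses, advises on, or treats patients
    directly_administers_therapy: bool  # e.g., closed-loop dosing
    low_risk: bool                      # minimal potential for patient harm


def triage(use: IntendedUse) -> Pathway:
    """Map a simplified intended-use profile to the tier described in the text."""
    if not use.clinical_purpose:
        # Purely administrative or informational tools (scheduling, billing).
        return Pathway.NOT_A_DEVICE
    if use.directly_administers_therapy:
        return Pathway.CLASS_III
    if use.low_risk:
        return Pathway.CLASS_I
    # Most AI diagnostics and decision support tools land here.
    return Pathway.CLASS_II


scheduler = IntendedUse(clinical_purpose=False,
                        directly_administers_therapy=False,
                        low_risk=True)
imaging_triage = IntendedUse(clinical_purpose=True,
                             directly_administers_therapy=False,
                             low_risk=False)
print(triage(scheduler).value)
print(triage(imaging_triage).value)
```

A check like this is only useful as an early planning prompt: it tells a team which conversations to have, not which pathway the FDA will actually assign.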

HHS & HIPAA: AI and Health Data

Healthcare AI feeds on data, often lots of data about patients. In the U.S., the privacy and security of personal health information are protected by laws like HIPAA (Health Insurance Portability and Accountability Act).

Here’s how privacy regulations shape AI:

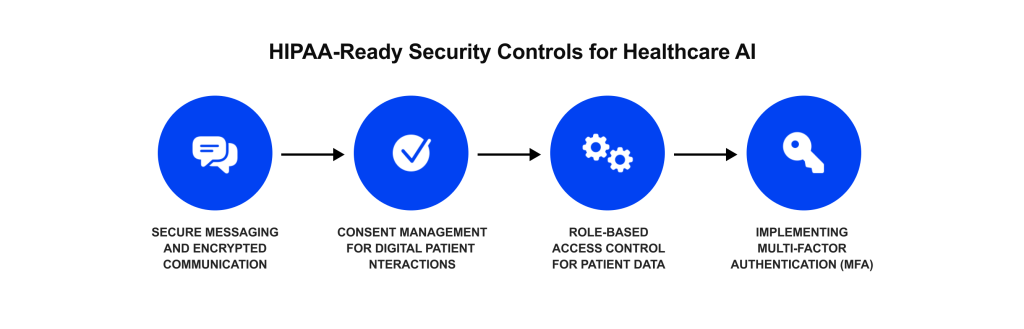

- HIPAA compliance is mandatory: Any AI solution that involves protected health information (PHI) from hospitals, clinics, insurers, etc. must follow HIPAA rules. This means if you’re a healthcare provider or a business associate (e.g., a vendor processing data on a provider’s behalf), you need safeguards like encryption, access controls, audit logs, and patient consent where required.

- De-identification and secondary use: If you want to use patient data to train AI models (especially large datasets), de-identifying it first is usually the most practical route under HIPAA. Data that has been properly de-identified, using the Privacy Rule’s Safe Harbor or Expert Determination method, can be used without individual authorizations. However, de-identification must be done carefully to avoid re-identification risks.

- Patient rights and transparency: Patients have rights to their data under HIPAA (and further, under laws like the 21st Century Cures Act’s information blocking rules). There’s a growing expectation that if AI is involved in someone’s care, patients should be informed. HHS has hinted at algorithmic transparency as an ethical goal. In fact, some suggest patients may have a right to know when AI is being used in their care decisions. While not yet a formal HIPAA requirement, being transparent can build trust.

- Bias and fairness with data: HHS’s 2025 AI Strategic Plan flagged concerns about AI bias and called out the need for careful governance. If your AI is trained on biased data and produces unfair outcomes, you not only risk patient harm but also potential regulatory scrutiny. Ensuring your training data is diverse and your model is evaluated for performance across demographics isn’t just good practice; it could help preempt discrimination complaints.
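Evaluating a model “for performance across demographics” can start with something as simple as comparing sensitivity per group. The sketch below is a minimal illustration, not a complete fairness audit: the function names, the sample records, and the 10-point gap threshold are all assumptions chosen for the example.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """records: iterable of (group, y_true, y_pred) with 0/1 labels.

    Returns {group: true-positive rate}; groups with no positive
    cases are skipped because their sensitivity is undefined.
    """
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


def flag_gaps(rates, max_gap=0.10):
    """Return groups whose sensitivity trails the best group by more than max_gap."""
    best = max(rates.values())
    return sorted(g for g, r in rates.items() if best - r > max_gap)


# Made-up evaluation records: (demographic group, actual label, model prediction)
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 0, 1),
]
rates = sensitivity_by_group(records)
print(rates)
print(flag_gaps(rates))  # → ['B']
```

Sensitivity is only one lens; a fuller evaluation would also look at false-positive rates, calibration, and sample sizes per group before drawing conclusions.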

FTC: AI, Trust, and Market Accountability

The Federal Trade Commission might not be a health agency, but it plays a vital role in AI oversight as a consumer protection and antitrust regulator. The FTC’s interest is ensuring companies tell the truth about AI and don’t harm consumers with it.

In healthcare, where AI tools may be sold to providers or even directly to consumers (think health apps, symptom-checker chatbots, wearables with “AI-powered” health insights), the FTC is watching for snake oil and bias:

- Truthful marketing: If you advertise that your AI can do something—diagnose disease, personalize therapy, cut healthcare costs—you must have solid evidence. The FTC has cautioned that unsubstantiated claims about AI could be considered deceptive. Nor should companies overuse buzzwords without substance, as this could mislead customers or investors. In 2023, the FTC even warned businesses that simply labeling a product “AI” without real AI, or implying capabilities beyond reality, is actionable.

- Avoiding bias and discrimination: The FTC has explicitly stated that deploying AI that results in biased outcomes can be an “unfair” practice. In one policy statement, the agency noted it may violate the law to use AI that “has discriminatory impacts” or to use it without mitigating known biases. In healthcare, this could translate to liability if, say, an AI system consistently undertreats or misdiagnoses a certain racial group due to training bias. Even if unintentional, such outcomes could fall under unfair practices affecting consumers.

- Data security and misuse: The FTC also enforces data protection in a broad sense. If a company’s poor security leads to a breach of health data, the FTC can take action for failing to protect consumer information. Likewise, using data in ways customers didn’t expect or consent to can land you in trouble.

- Emerging AI-specific rules: While no separate “FDA of algorithms” exists yet, the FTC’s aggressive posture is effectively filling some gaps. The Commission has created an Office of Technology and is hiring data scientists to guide investigations. We’ve already seen enforcement outside healthcare, e.g., against companies using biased hiring algorithms or lax facial recognition data practices. It’s reasonable to expect health tech could be next if egregious cases arise.

How Current Regulations Shape Healthcare AI Business Models

Regulation isn’t just a legal box to check; it directly influences how you design, deliver, and scale an AI-driven healthcare product.

Knowing the regulatory requirements often dictates what your product will and won’t do. For example, consider an AI symptom checker. If it simply offers generic health information, it might avoid FDA device status.

But if you want it to provide personalized diagnoses or treatment advice, you’re entering FDA territory and possibly a higher risk classification. Many companies choose to start with a narrower, lower-risk feature set to sidestep heavy regulation initially, then expand features once they have more resources for compliance.

Regulations like HIPAA can influence your architecture. If privacy concerns are paramount, a business might offer an on-premises deployment: installing the AI software in the hospital’s own IT environment so patient data never leaves their firewall.

This can alleviate compliance hurdles, but it affects your revenue model. Some AI vendors use a hybrid approach: sensitive processing happens locally, while de-identified analytics are sent to the cloud for model improvement, thus balancing compliance and product improvement. Regulations effectively push you to consider these models instead of defaulting to “send everything to our server.”
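The hybrid pattern described above hinges on one step: stripping identifiers locally before anything leaves the hospital environment. Here is a minimal sketch of that step. The field names (`name`, `mrn`, `admit_date`, and so on) are hypothetical, and the rules shown cover only a few of the identifier categories in HIPAA’s Safe Harbor method, so treat it as an illustration of the idea rather than a compliant de-identification routine.

```python
# Hypothetical field names; Safe Harbor lists 18 identifier categories,
# and this sketch handles only a handful of them.
DIRECT_IDENTIFIERS = {"name", "mrn", "ssn", "email", "phone", "address"}


def deidentify(record: dict) -> dict:
    """Return a copy of the record that is safer to send off-premises."""
    out = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}

    # Safe Harbor keeps only the year of dates tied to an individual.
    if "admit_date" in out:
        out["admit_year"] = out.pop("admit_date")[:4]  # expects "YYYY-MM-DD"

    # Ages above 89 are aggregated into a single "90 or older" category.
    if "age" in out and out["age"] >= 90:
        out["age"] = "90+"

    return out


local_record = {
    "name": "Jane Doe",
    "mrn": "12345",
    "age": 93,
    "admit_date": "2024-03-17",
    "risk_score": 0.82,
}
cloud_payload = deidentify(local_record)
print(cloud_payload)
```

Keeping this transformation inside the on-premises component means the cloud side never has to handle the raw record, which is the design point the hybrid model relies on.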

Healthcare is already notorious for long sales cycles. Add regulatory approvals, and they can become even longer. A Class II medical AI that requires FDA clearance can’t generate revenue until it’s cleared, which might take 6–12 months or more. This affects budgeting and pricing. On the adoption side, trust and liability fears mean hospitals and clinicians might be slow to adopt AI unless they’re confident it meets regulations. This is why many providers currently only pilot AI tools under controlled conditions.

The regulatory maze is spurring a new kind of team structure in HealthTech startups. It’s not enough to have data scientists and engineers; companies are hiring clinical experts, regulatory consultants, and compliance officers earlier in their growth.

From a business standpoint, this is an added cost – but one that can save the company from missteps. Incorporating regulatory strategy into the product roadmap from the start can prevent scenarios like building a great algorithm only to find out it triggers a compliance red flag that delays deployment for a year.

Finally, regulatory factors influence who you partner with and your funding strategy. A common pattern is HealthTech startups partnering with larger incumbents (like medical device companies or EHR vendors) to navigate regulations together.

On the investment side, there’s a balance: while some investors shy away from heavily regulated products (due to longer time to market), others specialize in it and view regulatory approval as a moat (once you clear the FDA, you have a barrier against smaller entrants).

So, companies are tailoring their pitches: if your AI is regulated, you highlight the rigorous process as a strength. If your AI is intentionally unregulated, you emphasize speed and market uptake. Both approaches can attract funding if framed correctly in light of the regulatory environment.

Regulation as a Design Constraint, Not an Innovation Killer

Regulation can feel like a drag on speed, but in healthcare, it usually works like a design constraint that makes products stronger. Highly regulated industries still innovate fast because safety, validation, and transparency force maturity early, and the same logic applies to health AI.

If you know your model may need to satisfy FDA expectations or ONC transparency rules, you build explainability, documentation, and testing into the product from day one, which results in more reliable performance in the real world. That rigor also becomes a trust signal: hospitals buy what they can defend, secure, and validate, so compliance-ready AI often passes procurement faster than black-box tools.

Clear rules also reduce chaos across the market by setting shared baselines (what needs to be documented, how bias is evaluated), which makes partnerships and adoption easier. And because regulation is only getting more explicit, building responsibly now is the cheapest way to future-proof; it’s much harder to retrofit auditability, consent, and governance after you’ve already shipped. In practice, the teams that treat compliance as part of product quality end up moving faster long-term because fewer things break once the AI hits real clinical environments.
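As one illustration of auditability built in from the start rather than retrofitted, the sketch below keeps a tamper-evident log of model decisions by chaining each entry to the hash of the previous one. The entry fields and function names are assumptions made for this example; a production log would also carry timestamps and live in durable, access-controlled storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry in the chain


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, *, model_version, input_digest, output, user):
    """Append one decision record, linked to the previous entry's hash."""
    body = {
        "model_version": model_version,
        "input_digest": input_digest,  # hash of the input, not raw PHI
        "output": output,
        "user": user,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    log.append({**body, "hash": _digest(body)})
    return log[-1]


def verify(log) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, model_version="1.4.0", input_digest="ab12",
             output="flag for review", user="clinician-07")
append_entry(audit_log, model_version="1.4.0", input_digest="cd34",
             output="no finding", user="clinician-07")
print(verify(audit_log))
```

Because each entry depends on the one before it, quietly altering a past decision invalidates everything after it, which is the property an external auditor would want to check.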

What Healthcare AI Regulation Is Likely to Mean Going Forward

Looking ahead, healthcare AI regulation in the U.S. is poised to get more explicit and comprehensive, but that’s not a bad thing. Greater clarity can actually reduce uncertainty for businesses.

Thus far, much of AI oversight has been through guidance documents, voluntary frameworks, or applying old laws to new tech. We’re likely to see dedicated regulations or amendments that address AI directly.

The White House’s 2025 “AI Action Plan” signaled an intent to accelerate AI innovation but also to establish an “AI Evaluation Ecosystem” for rigorous assessment. And although one administration might favor light-touch regulation and another stricter oversight, the long-term trend is toward codifying best practices into law. For businesses, this means today’s best practices could become tomorrow’s requirements.

In the near term, companies face a patchwork of state rules and uncertainty over federal direction. However, there’s a strong push to avoid 50 different regimes. We might see federal preemption in AI (where a federal law sets one standard, overriding state-by-state differences). In late 2025, for instance, there were moves to block overly restrictive state AI laws to maintain a unified approach. Over the next few years, expect harmonization efforts, both within the U.S. and internationally.

The FDA already collaborates with international bodies (IMDRF) on AI device standards, and the U.S. will watch the EU’s AI Act implementation closely. For businesses, this could mean that by, say, 2027, you have a clearer checklist of “must-dos” for AI safety and ethics that apply across the board. While that might feel like more regulation, it also provides confidence. It’s easier to invest in a new AI feature when you know the exact rules it must follow, rather than guessing and risking rework.

It’s likely that future regulations will heavily emphasize transparency, validation, and oversight of AI. We can anticipate requirements such as documenting your training data provenance, regularly reporting real-world performance (post-market surveillance for AI), enabling external audits of algorithms, and giving users (doctors or patients) an explanation of AI decisions.

The groundwork is visible now: ONC’s rule on algorithm transparency, the FDA’s insistence on clearer labeling of AI device indications and limitations, and the FTC’s stance on openness about AI use. Therefore, baking in auditability now is wise.

Companies that build ethical safeguards (like monitoring for drift or having a human-in-the-loop for certain AI decisions) will align well with a likely future where human accountability is required alongside AI.
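Drift monitoring with a human-in-the-loop fallback can be sketched in a few lines. The example below compares live model scores against a baseline using the Population Stability Index (PSI) and routes decisions to human review when the shift is large. The function names, the ten-bin layout, and the 0.2 threshold (a commonly cited rule of thumb, not a regulatory requirement) are assumptions for illustration.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between two non-empty samples of scores."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back if all baseline values are equal

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # eps avoids log(0) and division by zero for empty bins
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def needs_human_review(baseline_scores, live_scores, threshold=0.2):
    """True when live scores have drifted enough to warrant human oversight."""
    return psi(baseline_scores, live_scores) > threshold


baseline = [i / 100 for i in range(100)]       # scores seen at validation time
shifted = [0.9 + i / 1000 for i in range(100)]  # live scores piling up near 1.0

print(needs_human_review(baseline, baseline))
print(needs_human_review(baseline, shifted))
```

The useful part is less the statistic than the wiring: a drift signal that automatically changes who signs off on a decision is one way to keep human accountability alongside the AI.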

Importantly, no one expects the government to ban health AI or anything drastic. Quite the opposite: HHS, FDA, and others are actively encouraging AI innovation. The message from regulators is “Innovate, but do so responsibly.” Businesses should not fear that investing in an AI product will be wasted because “regulators might shut it down.”

If you’ve done the due diligence, it’s far more likely that regulation will legitimize your product. The goal is not to avoid regulation, but to help shape it and comply with it so that your innovations can scale with public and regulatory trust.

Conclusion

AI is undeniably transforming healthcare, and U.S. regulators are working to both enable that transformation and ensure it happens safely and ethically. For healthcare and HealthTech leaders, the key takeaway is that AI in healthcare is regulated, but not blocked.

There may not be one unified AI law, but through FDA device rules, HIPAA, ONC’s standards, FTC oversight, and more, a de facto framework is in place. Navigating it is now part of doing business in digital health.

The good news is that understanding and embracing these regulations will put you ahead. It forces you to clarify your product’s purpose, build with quality, protect patient data, and be transparent, all ingredients of long-term success in the healthcare market.

Regulations shape timelines and strategies, yes, but they also build the foundation of trust that health innovations need. As we’ve seen, compliant design can be a springboard rather than a straitjacket, leading to more robust and widely accepted AI solutions.

Edenlab believes that with the right strategy, AI can thrive under healthcare regulations, driving innovation that is not only exciting but safe, effective, and aligned with clinical needs. The regulatory environment is there to guide us in delivering AI solutions that truly improve patient care without compromising ethics or trust.

By treating those guardrails as part of the creative challenge, healthcare leaders can steer their AI initiatives to scalable success. After all, in healthcare, doing it right is just as important as doing it fast. And now you, as a business leader, have the knowledge to do both.

Building healthcare AI that can scale under regulation?

We help you enable datasets for AI, design and orchestrate agentic layers, and bake regulatory compliance into the product from day one so you avoid costly rework later.

FAQs

What’s the biggest business risk related to healthcare AI regulation?

Building and launching the “wrong” product category (clinical vs. non-clinical, PHI vs. non-PHI, high-risk vs. low-risk) and then discovering later that you need different evidence, controls, or approvals, causing delays, rework, lost deals, and liability exposure.

Why should companies consider working with specialized AI developers for healthcare products?

Because healthcare AI requires more than model performance: it also needs privacy and security, auditability, documentation, validation, integration into clinical workflows, and risk management. Specialists reduce costly missteps and shorten the path to adoption.

What role can a technical partner play in navigating healthcare AI regulations?

A technical partner can translate regulatory expectations into concrete engineering requirements: data governance, traceability, logging, access controls, model monitoring/drift, explainability artifacts, testing/validation plans, and compliant deployment architecture (cloud/on-prem/hybrid).

Are there opportunities to gain a competitive advantage while staying compliant with healthcare AI regulations?

Yes. Compliance-by-design becomes a trust signal that speeds procurement, lowers customer risk, supports enterprise contracts, and creates a moat through strong documentation, transparency, and proven governance that competitors struggle to retrofit.
